Category Archives: Web site strategies

A brief history of domain squatting

A long time ago at the dawn of the internet era, a tech journalist bought the mcdonalds.com domain name. Actually, “bought” isn’t really correct, because back then in order to obtain a dot com, you merely had to know how to send email to the right destination, and within days, the domain was all yours. That was how I first got my own strom.com domain back in 1993. Free of charge, even. It was the wild west. (Some may say it still is.)

The journalist was Josh Quittner, who was writing a story for Wired magazine about domain squatting, although it wasn’t glorified with an actual name back then. Josh noticed that the name wasn’t yet taken, so he tried to do the responsible thing and called a PR person at McD’s to figure out why they weren’t online and hadn’t yet grabbed it. Of course, back then, almost no one had gotten their names. Burger King hadn’t yet obtained its own domain name either, btw.

The PR person, bless their heart, asked, “Are you finding that the Internet is a big thing?” Yeah, kinda. As he wrote, “There are no rules that would prohibit you from owning a bitchin’ corporate name, trademarked or not.” So he grabbed the domain and refused to turn it over until McDonald’s agreed to provide high-speed Internet access for a public school in Brooklyn. Eventually, the company figured out that they really wanted the domain for their own business, and domain squatting has never been the same since then.

Domain squatting now has a wide and varied subculture. Here is a 2020 report from Unit42 that goes into further details, for example. This includes homograph attacks (using look-alike characters from non-Roman character sets), combo squats (that add extra words to a brand name to make the domain appear more legit), level squats (using a very long string of subdomains, counting on the browsers to truncate them and make them more believable) and I am sure many more perfidious techniques.
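To make these squatting styles a bit more concrete, here is a minimal Python sketch of how one might flag them. Everything in it is an assumption for illustration: the function names are hypothetical, `example.com` stands in for a real brand, the registered domain is naively taken to be the last two labels, and the three checks are rough heuristics rather than the method used in the Unit42 report.

```python
import unicodedata


def scripts_in(label):
    """Return the set of scripts (LATIN, CYRILLIC, ...) used by the letters in a label."""
    scripts = set()
    for ch in label:
        if not ch.isalpha():
            continue  # digits and hyphens say nothing about the script
        name = unicodedata.name(ch, "")
        scripts.add(name.split(" ")[0] if name else "UNKNOWN")
    return scripts


def squat_signals(hostname, brand_domain):
    """Return heuristic warnings for a hostname compared with a known brand domain."""
    hostname = hostname.lower().rstrip(".")
    brand_domain = brand_domain.lower()
    brand = brand_domain.split(".")[0]
    labels = hostname.split(".")
    if len(labels) < 2:
        return []
    # The genuine domain and its own subdomains are not squats.
    if hostname == brand_domain or hostname.endswith("." + brand_domain):
        return []
    # Assumption: the registered domain is the last two labels (wrong for .co.uk etc.).
    registered = ".".join(labels[-2:])
    signals = []
    # Homograph: one label mixes scripts, e.g. a Cyrillic letter among Latin ones.
    if any(len(scripts_in(label)) > 1 for label in labels):
        signals.append("homograph")
    # Combosquat: the brand name padded with extra words in the registered label.
    if brand in labels[-2] and labels[-2] != brand:
        signals.append("combosquat")
    # Levelsquat: the full brand domain buried in the subdomains of another domain.
    if brand_domain in hostname and registered != brand_domain:
        signals.append("levelsquat")
    return signals


if __name__ == "__main__":
    for host in ("www.example.com", "example-login.com",
                 "example.com.account-check.xyz", "ex\u0430mple.com"):
        print(host, squat_signals(host, "example.com"))
```

A real checker would need a public suffix list and a proper table of confusable characters, so treat this as a way to think about the categories and not as a filter you can rely on.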

A complicating factor is we now have all kinds of domains like .xyz and .lawyer to contend with, which only increases the threat space that bad actors can occupy in domain impersonation. Josh emailed me today and said, “I figured that with the creation of so many top-level domains the shenanigans around domain-name squatting would abate but it just created loads of new problems. For instance, some scammers pretending to be decrypt.co (my crypto news site) created a mirror site with a very similar name and used it for phishing. They periodically send out email to millions of people claiming to be decrypt and urge people to connect their crypto wallets to collect tokens. Emailing the host site and alerting them to the scam did no good.” Yeah, wild west indeed.

I was reminded of this story when I saw that yet another business had let its domain registration lapse, and the domain was purchased by tech consultants who are trying to give it back to its rightful owner. Why do these things happen? One major reason: domain ownership ultimately relies on humans to pay attention and renew registrations at the right times. (I just renewed a bunch of mine, which I had wisely set up years ago to expire on January 1.) Also, in big companies like McDonald’s there may be several different domain owners spread among various departments, especially if a company has been acquired or has created new subsidiaries.

Actually, there is a second reason: greed. Criminals have adopted these squatting techniques to lure victims in. Just a few days ago I thought I was buying some stamps from USPS.com, but was brought to some other domain that looked like it. You can’t be too careful. (And the USPS doesn’t discount their stamps by 40%, which should be a red flag.)

SiliconANGLE: State data privacy laws are changing fast – here’s what businesses need to know

With no federal data privacy law on the books, states are doubling down on new laws governing the protection of people’s data.

In the past year, Delaware, Indiana, Iowa, Montana, Oregon, Tennessee and Texas have all enacted such laws, more than doubling the number of states that previously had them: California, Colorado, Connecticut, Utah and Virginia.

Although that represents progress, it’s also a challenge for companies doing business nationally to keep track of the subtle differences among the various laws. My analysis for SiliconANGLE here.

Scott Helme and Probely join forces on SecurityHeaders.com

A well-known security tool, SecurityHeaders.com, is now part of many services that Probely offers. The company has a full range of web application and API vulnerability scanning solutions. That news story hides the history and importance of the union and its principal, Scott Helme. I had an opportunity to talk to him directly and find out what led to the change.

For those of you that aren’t familiar with Security Headers, it is a free website that can test your own site for weaknesses in various HTTP protocol and web policy implementations. Helme launched the site in 2015 after an experience testing his own home broadband router that could result in a compromised network. “I was just a guy with a hobby doing security research,” he told me recently. That led to a series of well-publicized other hacks, such as on the computers onboard the Nissan Leaf cars that he investigated with Troy Hunt. He also did some live hacks on TV of audience members’ equipment.

Since it was launched, the site has done 250M website scans.

Helme has worked with Probely since the company became a sponsor two years ago. “By joining forces with Probely, I’m incredibly happy that Security Headers will remain stable and viable for years to come!” said Helme. The union was designed for the site to be more sustainable and to leverage more resources, since until now it has been solely his own labors.

Helme’s goal with Security Headers was to make information security more comprehensible and actionable for the average person. That is why the site, and other tools that he offers, are all free and open. That will continue under the new regime at Probely. “I’ve put so much thought into it, working with these people, what they do, how they do it, and how they align with what I do,” he said. “We have a lot in common.”

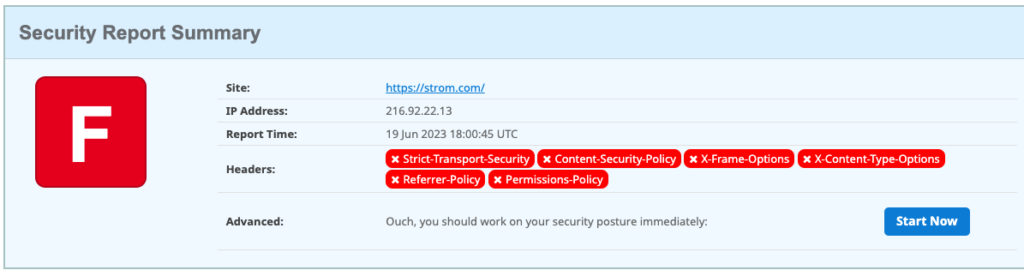

So I decided to try it out for myself, and I was quite surprised. I have had a website for almost 30 years, and while I knew about the Security Headers site, I had never actually done a scan. Here are my results:

Pretty miserable, right? I basically failed every one of Helme’s six tests. But I was in good (or bad) company: about half of those 250M scans also resulted in an “F” grade.

So — I have a lot of work to do. The results page doesn’t just show the failures, but also provides links to content from Helme on how to learn more about these protocols and policies and what I need to do to fix them to get a better grade — and improve my site’s security. For example, the page links to improvements in hardening my response headers, doing a better job of defining my content security policies and implementing strict transport security protocols. The content is based on numerous talks that Helme has given (and will continue to give) over the years and is written clearly with copious code examples too.
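For readers who want a feel for what such a scan looks at, here is a minimal Python sketch that reports which security-related response headers are missing from a set of headers. The list of six header names is my assumption about what the scan checks (the post itself only mentions content security policies and strict transport security), and the function name is hypothetical, not part of any tool from Helme or Probely.

```python
# Assumption: these are the six response headers a Security Headers scan grades.
EXPECTED_HEADERS = (
    "Content-Security-Policy",
    "Strict-Transport-Security",
    "X-Frame-Options",
    "X-Content-Type-Options",
    "Referrer-Policy",
    "Permissions-Policy",
)


def missing_security_headers(response_headers):
    """Return the expected headers that are absent, ignoring header-name case."""
    present = {name.lower() for name in response_headers}
    return [h for h in EXPECTED_HEADERS if h.lower() not in present]


if __name__ == "__main__":
    # Sample headers, roughly what a site with only two of the policies might send.
    sample = {
        "Content-Type": "text/html; charset=UTF-8",
        "content-security-policy": "default-src 'self'",
        "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
    }
    for header in missing_security_headers(sample):
        print("missing:", header)
```

To try it against a live site, you could pass in the headers from `urllib.request.urlopen(url).headers`. Keep in mind that a header being present says nothing about whether its value is a sensible policy, which is where the linked guidance comes in.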

But here is my dirty not-so-secret: I have zero experience with setting up website header parameters. This is probably the reason why my site received a failing grade. After years, even decades, of experience setting up various web servers, I have never touched the header configurations of any of my servers. Back in the early days of the web, these parameters didn’t exist. So I can cut myself a little slack. But really, I should have known better, after all the stuff that I have written about infosec over the years. That is one of the reasons why I try to be as hands-on as I can, and now I have some work to do and things to learn.

That is the essence of what he and Probely are trying to do — to teach us all how to have more secure sites.

(Note: this post is sponsored by Probely but is independent editorial content.)

Who killed Shireen?

The killing of Al Jazeera veteran journalist Shireen Abu Akleh last week has haunted me in the days since it happened. She was covering a raid by Israeli forces in the refugee camp of Jenin in the West Bank. For specifics about what happened, I would urge you to read Bellingcat’s analysis.

The Israelis initially said she was killed by Palestinians, then changed their story to say they weren’t sure who actually fired the fatal shot. Various other sources, including representatives of the Palestinian government and various Al Jazeera reports that have aired in the past week, claim it was Israelis, and done deliberately. These reports state that a sniper took careful aim at Shireen because she was wearing body armor and a helmet. The single shot hit her head just below her ear, which wasn’t protected.

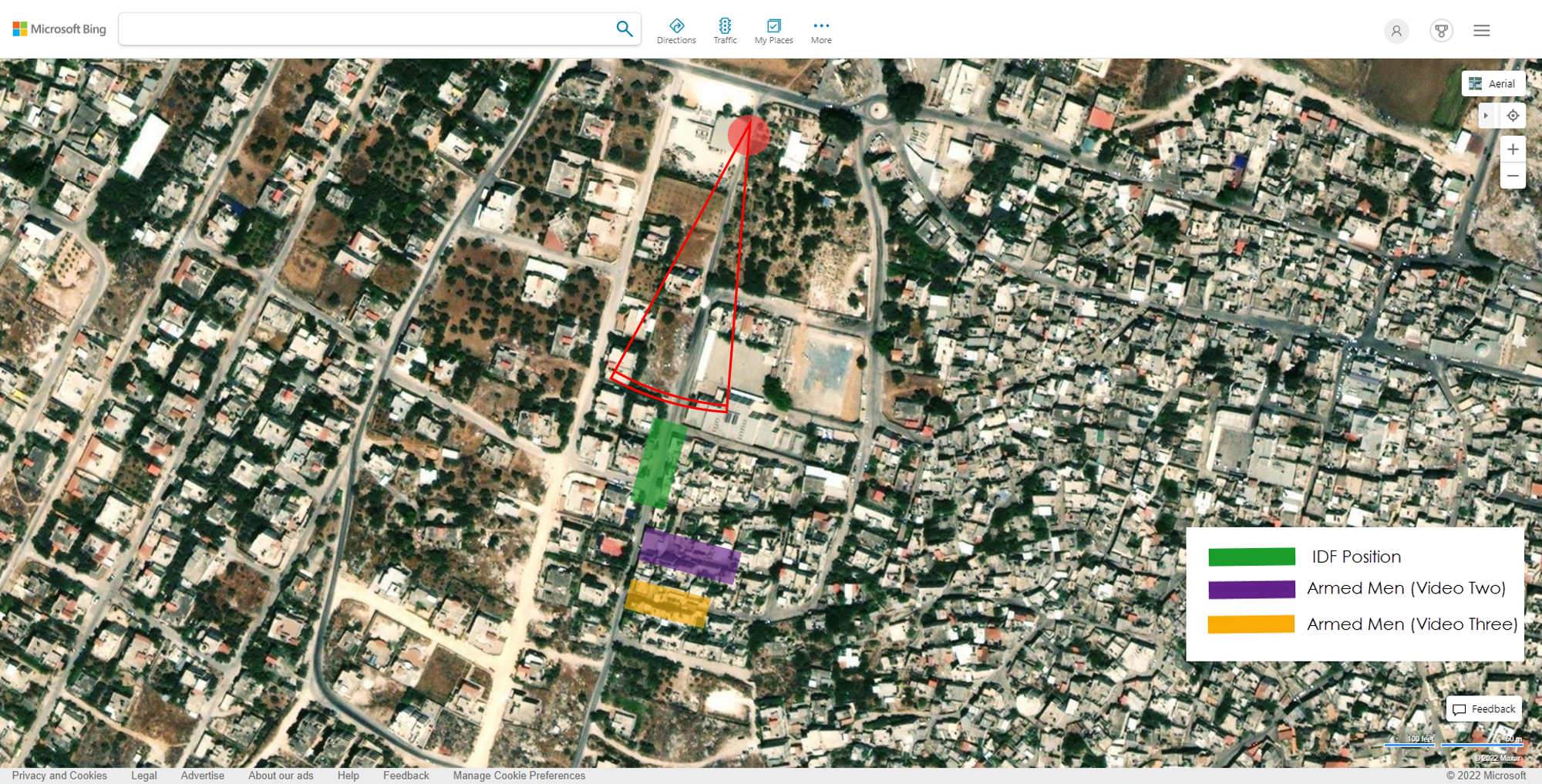

The map shows her position (the red dot at the top), as well as the positions of Army forces and Palestinians. Both groups were similarly armed with M4 assault rifles using the same ammunition. I’ll get back to this in a moment.

Even sadder than the circumstances surrounding Shireen’s death are those surrounding her funeral. There were additional clashes with the Israeli army and police at both the hospital morgue and the church where her services were held. I am not going to link to the video clips but let’s just say it is pretty clear that “clashes” is probably not the best descriptor. Tensions and emotions were high, and it was ugly.

There have been 19 journalists killed in Israel (including Gaza and the West Bank) over the past two decades. That link will take you to the Committee to Protect Journalists; two other people have been killed there without confirmed motives. Several things make this more personal for me. First, Shireen was a dual American/Palestinian citizen and a journalist. She is buried in the Christian cemetery that I visited three years ago when I was searching for the grave of Oskar Schindler. The route that took her to the cemetery is one that I have frequently walked over my many visits to the city. Finally, I have seen numerous reports of hers over the years that I have watched the Al Jazeera English channel, and admired her reporting and how often she was in the line of conflict. She was amazingly courageous.

Now, figuring out the origins of that bullet isn’t going to be easy. The Israelis and Palestinians don’t want to work together, but to have a definitive answer means you need to test the guns that were used that day. Some of them have been collected, but the chain of custody is probably broken on both the bullet and the weapons. Not since that November day in Dallas when JFK was killed has a single bullet carried so much weight.

I mourn Shireen’s death greatly.

Retaining my back catalog

Taylor Swift and I have something in common: we both are having trouble retaining our back catalogs. In her case, she is busily re-recording her first six albums since the originals are now under the control of a venture-backed investment group. In essence, she is trying to devalue her earlier work and release new versions that improve upon the recordings. In my case, I am just trying to keep my original blog posts and other content available to my readers, despite the continued effort by my blog editors to remove this content. Granted, many of these posts are from several years ago, back when we lived in simpler times. And certainly a lot of what I wrote about then has been eclipsed by recent events or newer software versions, but still: a lot hasn’t. Maybe I need to add more cowbell, or sharpen up the snare drums. If only.

I realize that many of my clients want to clean up their web properties and put some shiny new content in place. But why not keep the older stuff around, at least in some dusty archive that can still receive some SEO goodness and bring some eyeballs into the site? Certainly, it can’t be the cost of storage that is getting in the way. Maybe some of you have even done content audits, to determine which pieces of content are actually delivering those eyeballs. Good for you.

That link recommends removing non-relevant content, though, which I don’t agree with. I think you should preserve the historical record, so that future generations can come back and get a feel for what the pioneers who were making their mark on the internet once said and felt and had to deal with.

Some newspaper sites take this to the extreme. In July 2015, the venerable Boston Globe newspaper sent out a tweet with a typo, shown here. Typos happen, but this one was pretty odd. How one goes from “investigate” to “investifart” is perhaps a mystery we will never solve, but the Globe was a good sport about it, later tweeting, “As policy we do not delete typographical errors on Twitter, but do correct #investifarted…” Of course, #investifarted was trending before long. The lesson learned here: As long as you haven’t offended anyone, it’s ok to have a sense of humor about mistakes.

Both Tay and I are concerned about our content’s legacy, and having control over who is going to consume it. Granted, my audience skews a bit older than Tay’s – although I do follow “her” on Twitter and take her infosec advice. At least, I follow someone with her name.

I have lost count of the number of websites that have come and gone during the decades that I have been writing about technology. It certainly is in the dozens. I am not bragging. I wish these sites were still available on something other than archive.org (which is a fine effort, but not very useful at tracking down a specific post).

I applaud Tay’s efforts at re-recording her earlier work. And when I have the time, I will post the unedited versions of my favorite pieces, typos and investifarts and all.

In any event, I hope all of you stay healthy and safe this holiday season.

FIR podcast episode #151: How Akamai rebuilt its website and drove customer engagement

Few of us get to have as much influence over as public a website as Annalisa Church, VP Digital Technology, Insights & Operations for Akamai. She has built a career on converging marketing and technology to drive better experiences for customers and build long-term value for enterprises. She is devoted to transforming marketing into a data-driven organization through actionable insights and ensuring the voice of the customer is heard. Prior to Akamai, she worked for eight years in Dell’s marketing department.

Annalisa recently led a massive overhaul of the Akamai website, which is available in nine different languages, with more than 1,200 pages in English covering 18 different products. The site has tremendous customer engagement, with one million monthly visitors, and almost two-thirds of them become customers after visiting the site.

The diagram below shows some of the changes that Church implemented during her redesign to make it more effective and more relevant to visitors. These efforts have paid off in terms of more engagement, more conversions from visitors to customers, and wider impact.

Listen to our podcast here:

How one startup team has created five successful exits

It is an origin story that has been told numerous times: a group of computer nerds meets in college and goes on to build a software startup, eventually selling their company. But this is a story with a twist: four of the team members met more than 20 years ago when they were undergrad engineers at Carnegie Mellon University. Together with a fifth team member they would go on to have five different and successful exits at various tech startups.

The team includes Peter Pezaris (CEO and developer), David Hersh (product manager), James Price (devops), Michael Gersh (marketing/analytics) and latecomer Claudio Pinkus (who joined the others 13 years ago).

Their projects included:

- Codestream, a devops collaboration platform which was founded in 2017. NewRelic announced this week that they were acquiring the company.

- Glip.com, a team collaboration platform acquired by RingCentral in 2015.

- Multiply.com, a social commerce platform acquired by Naspers in 2010.

- Commissioner.com, one of the first online fantasy sports platforms, which was acquired by CBS/Sportsline, and

- Ask.com, acquired by IAC in 2005.

What is intriguing about the group is how well they worked together in these various companies. The group eventually settled into specific roles – Pezaris has been the CEO and chief coder in the last three ventures – and has worked from different locations since well before the pandemic made remote work the norm for many of us.

Because the team has been geographically remote, they have tended to focus on building better collaboration tools over the years as they built their various enterprises. This is most obvious with their latest venture, Codestream, a free open-source implementation which allows developers to collaborate on writing code. It works with the JetBrains, VS Code and Visual Studio integrated development environments (IDEs), supports pull requests from GitHub, GitLab and BitBucket, and integrates with other code management and messaging tools such as Jira, Slack and Asana.

That is a lot of integration, but the goal was to provide a richer request interface and annotation mechanism to make code reviews more meaningful, and to check out and run builds more quickly. Prior to Codestream, software engineering teams couldn’t easily talk to each other, particularly if they were using different IDEs or code editors and particularly if they wanted to comment on each other’s code in near real-time. The idea for the company was born out of doing cumbersome things, such as taking screenshots of one’s code and then sending it as an attachment in Slack. “This was a natural progression for us to applying messaging to our own daily use case,” said Pezaris. “We wanted to connect coding teams that don’t use the same IDE, especially if they are a larger team. There is always some odd person who is using a different editor.”

One of the notable differences with the team is how they used the Silicon Valley Y Combinator pitch competition to focus their business strategy. “The application process forces you to address how to turn your project into a real business, and by answering the questions it will get you to think more carefully about your market and customer acquisition,” said Pezaris. The team was frustrated because two of their past companies (Multiply and Glip) were precursors of Facebook and Slack respectively, both of which had done better jobs of integrating with Silicon Valley culture and of course had much better market positioning.

This shows that while a great idea can form a solid foundation for a startup, you need more than just the idea and some snappy app. You must work out how to build a business too. The Y Combinator competition brought the Codestream team to this next level.

Initially, they applied to the competition on a whim and almost didn’t finish their application in time. Pezaris told me that the final piece of their application was a one-minute video clip explaining their company. He was working on it in an airport hours before the deadline, and was literally uploading the file as he was walking down the jetbridge to board his flight. They were accepted in the winter 2018 class. “While there is a very low chance you will be accepted, it is a golden ticket,” he said.

Another notable aspect of Codestream was how the company was founded on creating an open source offering. There are numerous success stories of other open source efforts that have been acquired by Red Hat, Oracle, and Microsoft, showing that this can sometimes be a pathway towards success.

Finally, the team never anticipated their eventual suitors going into each project. Multiply, for example, was bought by a South African media company that was interested in expanding to customers in emerging markets. “Each acquisition has been very different, and we have tried to stay on after the deal has closed. Some of the acquisitions brought up red flags that turned out to be nothing burgers, but the important point is that we all went through them together,” Pezaris said.

Avast blog: The importance of equitable and inclusive access to digital learning

Schools remain closed around the world. A UNICEF analysis last summer found that close to half a billion students remain cut off from their education, thanks to a lack of remote learning policies or a lack of the gear needed to do remote learning from their homes. And as UNICEF admits, this number is probably on the low side because many parents and teachers lack the skills to help their kids learn effectively with online tools.

While the situation has improved since last year and more kids are back in their actual classrooms, there are still critical gaps in math and reading skills and a wide disparity when country-wide data is compared. The equity/inclusion problem isn’t exactly new, but the pandemic has focused awareness and foreshadowed the obstacles. I discuss this in my latest blog post for Avast here.

Telegram designs the ideal hate platform

Last week the Parler social network went back online, after several weeks of being offline. Its return got me thinking more about what the ideal hate platform is. I think there are two essential elements: the ability to recruit new followers to hate groups, and the ability to amplify their message. The two are related: you ideally need both. Parler, for all the talk about its hate-mongering, really isn’t the right technical solution, and I will explain why Telegram has succeeded.

This blog post comes out of email discussions that I have had with Megan Squire, who studies these groups for a living as a security researcher and CS professor. She gave me the idea when we were discussing this report from the Southern Poverty Law Center on how Telegram has changed the nature of hate speech. It is a chilling document that tracks the rise of these groups over the past year. But the SPLC isn’t the only one paying attention: numerous other computer science researchers have tracked the explosive growth in these pro-hate groups since the January Capitol riots and other seminal events in the hate landscape.

Telegram’s rise in numbers doesn’t tell the complete story. Telegram has crafted a more complete social platform for distributing hate speech and recruiting new followers. Certainly, Facebook still has the largest user base, but their tech hate stack (if you want to give it a name) is nowhere near as well developed as Telegram’s, and Parler’s is a distant third. Compare the three networks below in terms of both amplification and recruitment elements:

| Criteria | Parler | Facebook | Telegram |
| --- | --- | --- | --- |
| Type of service | Microblog | Social network | Messaging+ |
| Coherent and transparent reporting process for hate speech | No | Mostly and improving | No |
| Support email inbox | No | Yes | No |
| Content moderation team | It depends | Yes | It depends (see below) |
| Appeals process | Yes | Yes | No |
| Encrypted messaging | No | Separate app | Built-in |
| Corporate HQ location | USA (for now) | USA | Dubai |
| Growth in English-speaking hate group followers | Unknown | Unknown | Huge growth (SPLC report) |
| Group cloud-based file storage | No | No | < 2 GB |
| Group-based sticker sets | No | No | Yes |
| Bot infrastructure and in-group payment processing | No | No | Yes |

“Telegram is absolutely the platform of choice right now for the harder-edged groups. This is for technical reasons as well as access/moderation reasons,” says Squire. You can see the dichotomy in the table above: most of the moderation features that are (finally) part of Facebook are nowhere to be found or are implemented poorly on Telegram, and Parler is pretty much a no-show. Telegram’s file-sharing feature, for example, “allows hate groups to store and quickly disseminate e-books, podcasts, instruction manuals, and videos in easy-to-use propaganda libraries.” I have put links in the chart above to descriptions on why the bot infrastructure and sticker creation features are so useful to these hate groups.

What about moderating content? Here we have conflicting information. I labeled the boxes for Parler and Telegram as “it depends.” Telegram has said that their users do content moderation. In their FAQ they claim to have a team of moderators. For Parler, their community guidelines document says in one place that they don’t moderate or remove content, and in another that they do. My guess is that they both do very little moderation.

The picture for Parler is pretty bleak. If they do succeed in keeping their site up and running (which isn’t a foregone conclusion), they have almost none of the elements that I call out for Facebook and Telegram. Using the Twitter micro-blogging model doesn’t make them very effective at amplification of their messages (at least, not until some of their personalities can bring over huge crowds of followers) or in recruitment, especially now that their mobile apps have been neutered.

There are two technical items that are both useful for Telegram: its encrypted messaging feature and the difference between its mobile app and web interfaces. Much has been written about the messaging features between the different social networks (including my own blog post for Avast here). But Telegram both does a better job at protecting its users’ privacy (than Facebook Messenger) and has much better integration into its main social network code.

The second item is how content can be viewed by Telegram users. To get approval for its app on the iTunes and Google Play app stores, Telegram has put in place self-censorship “flags” so that mobile users can’t view the most heinous posts. But all of this content is easily viewed in a web browser. Parler could choose to go this route, if they can get their site consistently running.

As you can see, defining the tech hate stack isn’t a simple process, and it keeps evolving as hate groups figure out how to attract viewership.

N.B.: If you want to read more blogs about the intersection of tech and hate, there is this post where I examine the evolution of holocaust deniers and this post on fighting online disinformation and hate speech.