A couple of years ago I wrote about the report that North Korean IT workers were using fake resumes to get jobs as software developers. Once ensconced, they would leverage their position to launch attacks as well as using their salaries to generate hard cash for their government handlers. But a new research report has shown this threat to be even more pernicious, with North Korean digital animators getting jobs working on major motion pictures that will be broadcast on HBO, Amazon, and other outlets.

As I mentioned in my earlier post, this is the ultimate supply chain attack, but the supply is the humans who produce the code, rather than the code itself. The new report is based on a misconfigured cloud server, showing that even North Koreans can make this common programming mistake that is made every day by nerds around the globe. The group working on this server left it wide open for a month, during which time security researchers could download the files placed on this server and figure out the workflows involved.

They learned from the incident how difficult it is for animation studios to vet whether or not their outsourced work ends up on North Korean computers and how these studios might be inadvertently employing North Korean workers. It also demonstrates how hard it can be to have effective sanctions when it comes to our interconnected world.

As you might already know, North Korea doesn’t have very many internet connections by design, because of these sanctions. Typically, an IT shop would have just a couple of connected computers with net access that is carefully monitored by the state. Looks like they need to add “search for unprotected cloud storage buckets” in their monitoring software, just like the rest of us have learned.

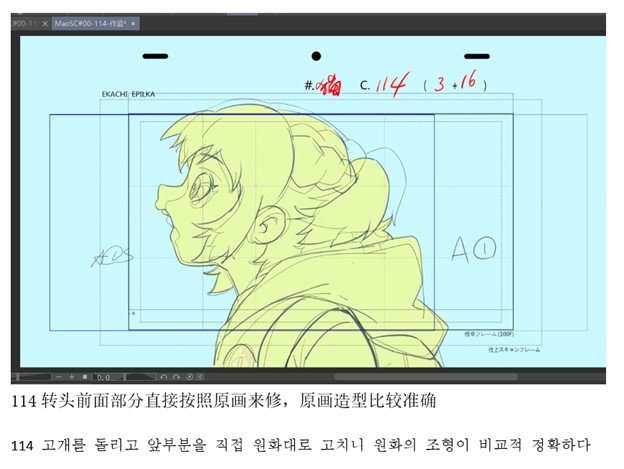

What makes this discovery interesting is how far down the workflow food chain these animators operate. Examining one of the images posted by the researchers, shown below, you can see two text annotations, one in Korean and one in Chinese characters. The conclusion is that this was a translation between two teams working on the project: the hidden Korean team that was a subcontractor for the Chinese team. China is often the safe-mode proxy to hide North Korean origins from Western-based businesses, and Chinese businesses that have been discovered to be these go-betweens are eventually sanctioned by our government.

The researchers found work on a half dozen different animation projects that span the globe of video programming being produced for Japanese, American, and British audiences. Some of these shows aren’t scheduled to run until later this year or next. “There is no evidence to suggest that the companies identified in the images had any knowledge that a part of their project had been subcontracted to North Korean animators. It is likely that the contracting arrangement was several steps downstream from the major producers,” they wrote.

Last October, our government updated its warnings about recognizing potential North Korean IT workers, such as tracking home addresses of the workers to freight forwarding addresses, or where language configurations in software don’t match what the worker is actually speaking. They further recommend any hiring manager do their own background checks of all subcontractors, and not trusting what the staffing vendor supplies, and verifying that any bank checks don’t originate from any money service business. They further recommend preventing any remote desktop sessions and verifying where any company computers are being sent, and for workers to hold up any physical ID cards while they are on camera and show their actual physical location.

I am sure that animation studios aren’t the only ones employing North Koreans. The human employment supply chains can snake several times around the globe, and this means all of us that hire IT — or indeed any specialized talent — need to be on guard about all the component layers.

There aren’t too many people who have become modern models for dolls produced by the American Girl company, let alone women who have had a long volunteer career with the American Red Cross. But Dorinda Nicholson – the

There aren’t too many people who have become modern models for dolls produced by the American Girl company, let alone women who have had a long volunteer career with the American Red Cross. But Dorinda Nicholson – the