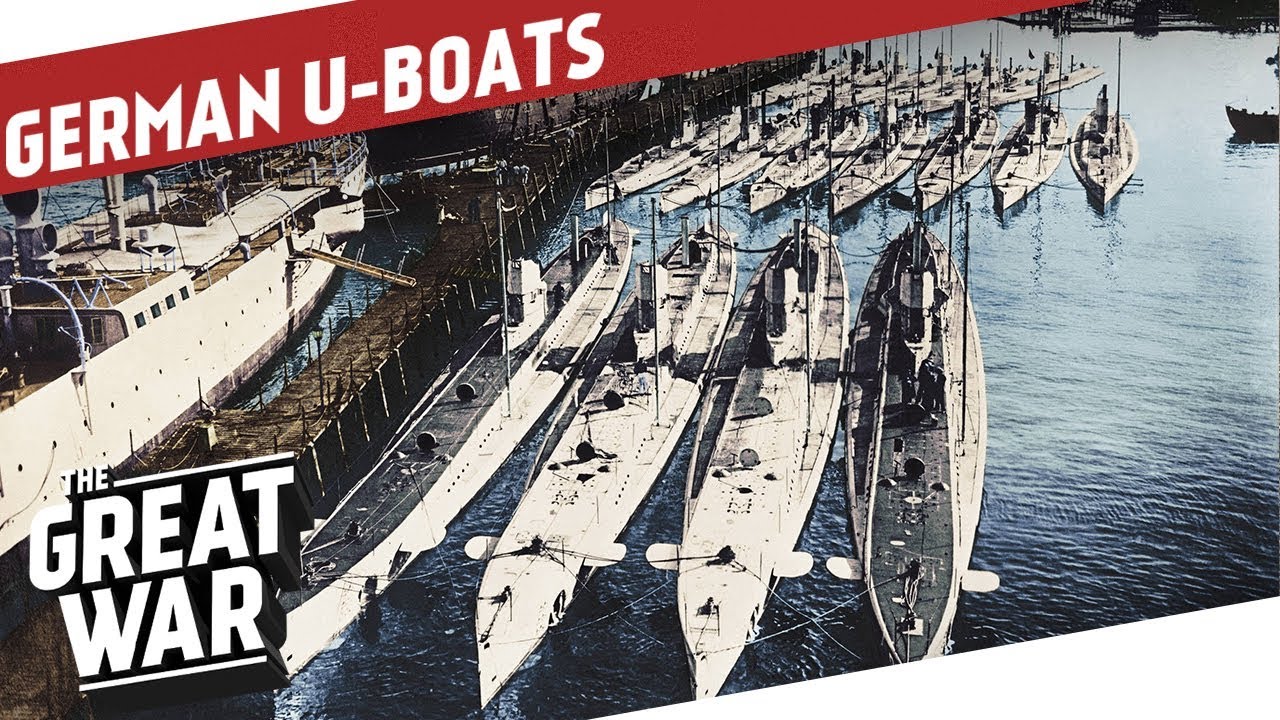

All this chatter about ChatGPT and large language models interests me, but from a slightly different perspective. You see, back in those pre-PC days when I was in grad school at Stanford, I was building mathematical models as part of getting my degree in Operations Research. You might not be familiar with this degree, but basically it was about applying various mathematical techniques to solving real-world problems. OR got its beginnings trying to find German submarines and aircraft in WWII, and then got popular for all sorts of industrial and commercial applications post-war. As a newly minted math undergrad, I found the field had a lot of appeal, and at its heart was building the right model.

Model building may bring up all sorts of memories of putting together plastic replicas of cars and ships and planes that one would buy in hobby stores. But the math models were a lot less tangible and required some careful thinking about the equations you chose and what assumptions you made, especially about the initial data set that you would use to train the model.

Does this sound familiar? Yes, but then and now couldn’t be more different.

For my class, I recall the problems that we had to solve each week weren’t easy. One week we had to build a model to figure out which school in Palo Alto we would recommend closing, given declining enrollment across the district, a very touchy subject then and now. Another week we were looking at revising the standards for breast cancer screening: at what age and under what circumstances do you make these recommendations? These problems could take tens of hours to come up with a working (or not) model.

I spoke with Adam Borison, a former Stanford Engineering colleague who was involved in my math modeling class: “The problems we were addressing in the 1970s were dealing with novel situations, and figuring out what to do, rather than what we had to know built around judgment, not around data. Tasks like forecasting natural gas prices. There was a lot of talk about how to structure and draw conclusions from Bayesian belief nets which pre-dated the computing era. These techniques have been around for decades, but the big difference with today’s models is the huge increment in computing power and storage capacity that we have available. That is why today’s models are more data heavy, taking advantage of heuristics.”

Things started to change in the 1990s when Microsoft Excel introduced its “Solver” feature, which allowed you to run linear programming models. This was a big step, because prior to this we had to write the code ourselves, which was a painful and specialized process, and the basic foundation of my grad school classes. (On the Stanford OR faculty when I was there were George Dantzig and Gerald Lieberman, the two guys that invented the basic techniques.) My first LP models were written on punched cards, which made them difficult to debug and change. A single typo in our code would prevent our programs from running. Once Excel became the basic building block of modeling, we had other tools such as Tableau that were designed from the ground up for data analysis and visualizations. This was another big step, because sometimes the visualizations showed flaws in your model, or suggested different approaches.
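
For readers who have never seen a linear programming model, here is a minimal sketch in Python of what one looks like. It is not the code we wrote on punched cards, and it is not how Excel's Solver works internally: it simply checks every corner of the feasible region, which is only practical for a toy model with two variables and assumes the region is bounded. The function name `solve_lp_2d` and the numbers in the example are made up for illustration.

```python
from itertools import combinations


def solve_lp_2d(objective, constraints):
    """Maximize c1*x + c2*y subject to a*x + b*y <= rhs for each (a, b, rhs).

    Brute-force sketch: intersect every pair of constraint boundaries,
    keep the intersection points that satisfy all constraints, and return
    the best one as (value, x, y). Returns None if no feasible corner exists.
    Assumes the feasible region is bounded.
    """
    c1, c2 = objective
    best = None
    for (a1, b1, r1), (a2, b2, r2) in combinations(constraints, 2):
        det = a1 * b2 - a2 * b1
        if abs(det) < 1e-12:
            continue  # boundaries are parallel, so they never cross
        # Cramer's rule for the point where the two boundary lines meet
        x = (r1 * b2 - r2 * b1) / det
        y = (a1 * r2 - a2 * r1) / det
        # Keep the point only if it satisfies every constraint
        if all(a * x + b * y <= rhs + 1e-9 for a, b, rhs in constraints):
            value = c1 * x + c2 * y
            if best is None or value > best[0]:
                best = (value, x, y)
    return best


# Hypothetical example: maximize 3x + 5y
example_constraints = [
    (1, 0, 4),    # x <= 4
    (0, 2, 12),   # 2y <= 12
    (3, 2, 18),   # 3x + 2y <= 18
    (-1, 0, 0),   # x >= 0, written as -x <= 0
    (0, -1, 0),   # y >= 0, written as -y <= 0
]
result = solve_lp_2d((3, 5), example_constraints)
print(result)
```

Real solvers use far smarter methods than checking every corner, but the structure is the same: an objective to maximize and a list of constraints that fence in the answer.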

Another step forward in modeling was the era of big data, and one example is the Kaggle data science contests. These have been around for more than a decade and did a lot to stimulate interest in the modeling field. Participants are challenged to build models for a variety of commercial and social causes, such as working on Parkinson’s cures. Call it the gamification of modeling, something that was unthinkable back in the 1970s.

But now we have the rise of the chatbots, which have put math models front and center, for good and for bad. Borison and I are both somewhat hesitant about these kinds of models, because they aren’t necessarily about the analysis of data. Back in my Stanford days, we could fit all of our training data on a single sheet of paper, and that was probably being generous. With cloud storage, you can have a gazillion bytes of data that a model can consume in a matter of milliseconds, but trying to get a feel for that amount of data is tough to do. “Even using ChatGPT, you still have to develop engineering principles for your model,” says Borison. “And that is a very hard problem. The chatbots seem particularly well-suited to the modern fast fail ethos, where a modeler tries to quickly simulate something, and then revise it many times.” But that doesn’t mean that you have to be good at analysis, just at making educated guesses or crafting the right prompts. Having a class in the “art of chatbot prompt crafting” doesn’t quite have the same ring to it.

Who knows where we will end up with these latest models? It is certainly a far cry from finding the German subs in the North Atlantic, or optimizing the shortest path for a delivery route, or the other things that OR folks did back in the day.

One reader has told me that this pic shows WWI-era U-boats, which were smaller and could spend only a limited time underwater. The tender is also of WWI vintage. Sorry about that.